Meta Strikes Major AI Deal with Amazon Web Services, Embracing Graviton Chips in Cloud Computing Shift

By [Your Name]

April 28, 2026

In a strategic move that underscores the intensifying battle for dominance in cloud-based artificial intelligence (AI), Meta has inked a landmark agreement to deploy millions of Amazon Web Services (AWS) Graviton processors to power its expanding AI workloads. The deal, announced Friday, marks a significant coup for AWS as it seeks to fend off rivals like Google Cloud and Microsoft Azure while proving the mettle of its in-house silicon.

The partnership signals a broader industry shift toward specialized, cost-efficient chips for AI inference—the process of running trained AI models—as companies seek alternatives to the expensive, power-hungry GPUs traditionally used for training. While Nvidia remains the undisputed leader in AI hardware, Amazon’s Graviton processors, built on ARM architecture, are emerging as a compelling option for enterprises looking to optimize performance and reduce costs.

The Graviton Advantage: Why Meta Chose AWS

AWS Graviton, now in its fifth generation, is a custom-designed ARM-based CPU built for cloud computing efficiency. GPUs excel at training massive AI models, but CPUs like Graviton are increasingly favored for inference tasks. These are the real-time operations that power AI applications, such as generating text, executing code, or managing multi-step agent workflows.

Meta’s decision to use Graviton reflects a growing industry trend: as AI agents become more sophisticated, demand for high-throughput, low-latency processing is surging. AWS has positioned Graviton as a fit for these workloads, claiming better price-performance than comparable x86 chips from Intel and AMD.

“AI workloads are evolving beyond just training,” said an AWS spokesperson. “With agents handling complex reasoning and coordination, enterprises need processors optimized for inference at scale. Graviton was engineered precisely for this shift.”

A Strategic Blow to Google Cloud

The deal is particularly notable given Meta’s recent $10 billion, six-year cloud agreement with Google Cloud, signed in August 2025. Before that agreement, Meta had relied primarily on AWS, supplemented by Microsoft Azure. Industry analysts say that while Google Cloud secured a major win last year, Amazon’s latest deal shows how fierce competition in the cloud sector has become: customer loyalty is fluid, and vendors aggressively court high-profile clients.

AWS’s timing was also telling: the announcement came just as Google Cloud Next, the company’s flagship conference, concluded. During the event, Google unveiled the latest generation of its custom AI chips, the Tensor Processing Units (TPUs), which are designed to rival Nvidia’s offerings. Amazon’s move appeared calculated to reinforce its position as a formidable competitor in the AI infrastructure race.

The Broader AI Chip Wars

The cloud and semiconductor industries are undergoing a seismic transformation as tech giants invest billions in proprietary silicon to reduce reliance on third-party suppliers like Nvidia. Amazon, Google, Microsoft, and even Meta itself are pouring resources into custom chips, each vying for an edge in performance, efficiency, and cost.

Amazon’s AI hardware portfolio includes not only Graviton but also Trainium, its AI accelerator chip optimized for both training and inference. However, Trainium’s availability has been constrained by another blockbuster deal: Anthropic, the AI startup behind Claude, recently committed to spending $100 billion over a decade on AWS infrastructure, with a heavy emphasis on Trainium. In return, Amazon increased its investment in Anthropic to $13 billion, further solidifying the partnership.

Meanwhile, Nvidia, the dominant force in AI hardware, is not standing still. The company recently launched its Vera CPU, an ARM-based processor targeting AI agent workloads—a direct challenge to Graviton. Unlike AWS, Nvidia sells its chips directly to enterprises and cloud providers, including Amazon itself. This layered competition underscores the complex dynamics of the AI infrastructure market, where vendors are simultaneously partners and rivals.

Amazon’s Aggressive Posture in the AI Arms Race

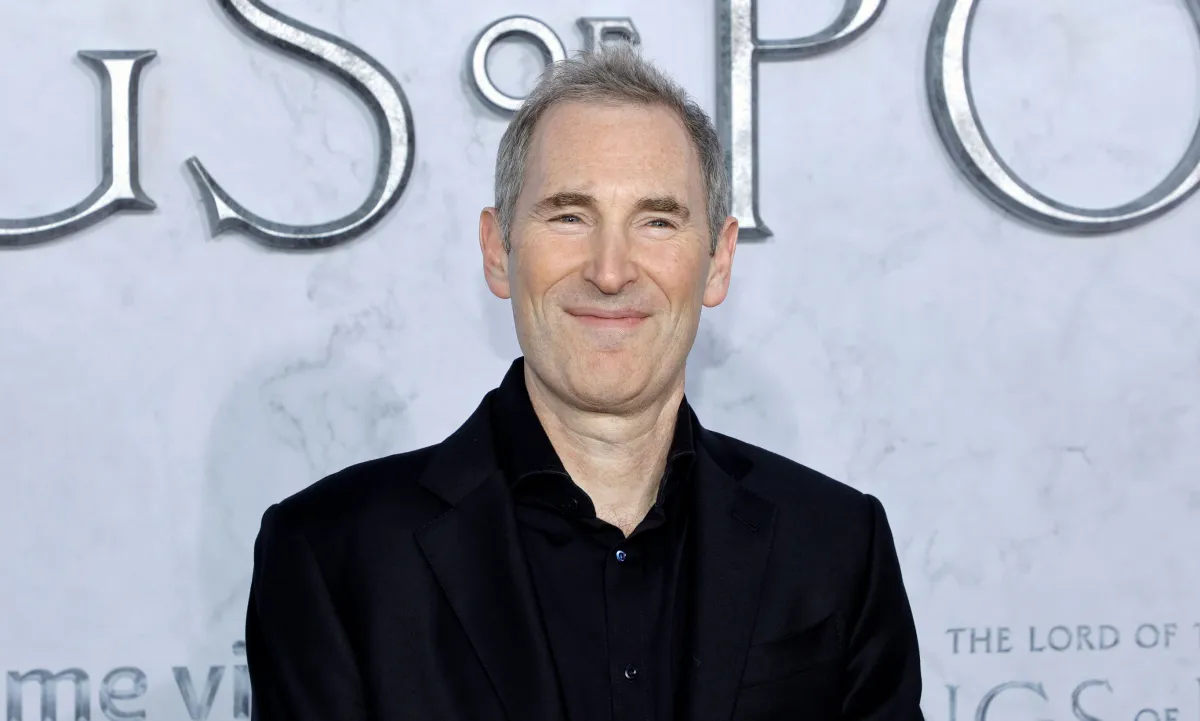

Amazon CEO Andy Jassy has made no secret of his ambitions to disrupt the AI chip hierarchy. In his annual shareholder letter earlier this month, he took direct aim at Nvidia and Intel, arguing that enterprises are demanding better price-performance ratios and that AWS intends to win on that basis.

“The era of overpaying for AI compute is ending,” Jassy wrote. “Customers want efficiency, scalability, and cost-effectiveness—not just raw power.”

Behind the scenes, Amazon’s chip development team faces immense pressure to deliver. A recent exclusive tour of AWS’s Trainium lab revealed a highly secretive operation where engineers are racing to refine next-generation AI processors. The Meta deal serves as a critical validation of those efforts.

What This Means for the Future of Cloud AI

The Meta-AWS partnership is more than just another cloud contract—it’s a bellwether for the evolving AI infrastructure landscape. As AI applications diversify beyond training, companies are reassessing their hardware strategies, balancing GPU power with CPU efficiency. AWS’s ability to secure Meta as a Graviton customer could accelerate adoption among other enterprises, further eroding Nvidia’s near-monopoly.

Yet challenges remain. Nvidia’s ecosystem, including its CUDA software framework, remains deeply entrenched in AI development. Additionally, Google and Microsoft continue to innovate with their own silicon, ensuring that the cloud wars will only intensify.

For now, Amazon has secured a high-profile endorsement of its homegrown chips. But in the cutthroat world of AI infrastructure, today’s victory is merely a prelude to tomorrow’s battle. As one industry analyst put it: “The race isn’t just about who has the best chips—it’s about who can deliver the most value at scale. And that war is far from over.”